At the Mayfield May 2018 CIO Insight Forum, we were joined by Ben Golub, whom we recently welcomed to Mayfield as a venture advisor and who led Docker as CEO for the last five years, successfully driving huge market adoption.

Mayfield hosts CIO Insight Forums on a regular basis to dive into emerging topics around the transformation of the IT landscape with insightful thought leaders to help drive the discussion. We intend for these forums to be an exchange of ideas for CIOs, whereby best practices and real IT issues can be discussed openly.

One of the recurring conversations we’ve been having with our CIO Network is the demand to drive technology adoption at ever-increasing speeds. We are witnessing a rapid evolution of IT infrastructure, driven in large part by innovative advancements like Docker containers and microservices. Ben shared his insights on the evolution of IT, the impact containerization has had on software and organizations, and where we’re headed next. He also provided key predictions for 2018 and beyond.

As CEO of Docker from 2013 to 2017, Ben helped oversee a revolution in how software is developed, deployed, and managed across the largest companies in the world. Ben has also served as CEO of Gluster and Plaxo, was a top executive at Verisign, and is currently serving as executive chairman and interim CEO of Storj Labs, a pioneer in the emerging field of distributed cloud storage, and as a board member at Venafi.

Here’s a Summary of His Top Insights

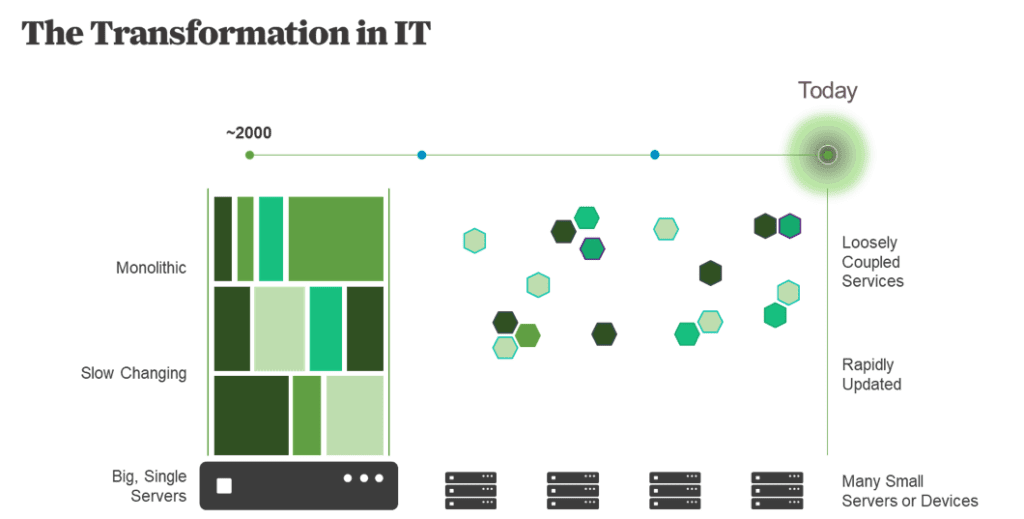

- Monolithic → Components: Over the last 20 years IT has steadily evolved from large monolithic applications running on large, monolithic systems to increasingly smaller, interchangeable components running on distributed, interchangeable devices.

- Containerized Development: Containers represent a fundamental shift in how we approach software development and deployment.

- Application-Centric Enabler: Containerization is key to the adoption of application-centric infrastructure, DevOps, microservices and automation.

- Consolidation Enabler: Data center consolidation, hybrid clouds, IoT, and big data are all enabled by containerization.

- Bimodal IT is a Myth: Containers can also be used to more effectively manage and migrate large legacy applications, enabling them to run on modern infrastructure, and enabling a gradual transformation into smaller, more nimble services.

- Supply Chain Model: Taking a supply chain approach to software can make applications more efficient and more secure.

- Organizational Efficiency: Taking the same microservices approach to how companies organize their teams can make them much more nimble and efficient.

- Internet Scale: We are rapidly moving toward a world of decentralized apps that can operate at internet scale.

Ben Golub on How Containers Are Changing IT

I’ve spent nearly my entire life in Silicon Valley. I grew up in Cupertino; my first job was cutting apricots at an orchard that was later paved over and became the site of Apple’s headquarters.

That was in 1982. I’m now on my seventh startup. Obviously, we’ve all come a long way since then. And the one pattern I’ve consistently seen throughout my time in Silicon Valley is the trend toward distribution and decentralization.

Over the last 20 years we’ve gone from big expensive servers running monolithic applications, which were highly dependent and rarely changed, to increasingly smaller pieces of interchangeable infrastructure that are updated rapidly and continually.

From Chaos to Order

But when lots of things that are changing rapidly interact with lots of other things that are changing rapidly, these interactions can result in unintended consequences. Containers help solve this problem by providing a consistent interface.

Containerization Explained

A good way to think about containerization is to remember how we used to ship physical objects. In the old days, everything was in a different kind of package. Coffee beans were in bags, car parts in barrels. It took a whole team of dock workers to unload every item. And if bananas and monkeys were being shipped in the same cargo hold, they might interact in a way that wouldn’t be good for the bananas.

Then the shipping container came along. No matter what’s inside, the container is always the same size and shape, with hooks and holes in the same places. That means it can be easily stacked and moved with a crane from the ship to a truck or a train.

Shipping containers revolutionized cargo transport. Docker containers do the same thing with code.

Any library, application, or service can be placed inside a digital container that also “looks the same on the outside.” Every container starts, stops, logs, and does everything else in a consistent way. Using containers, your code can move easily from a developer’s laptop to the data center, across a cluster, to the Amazon or Azure cloud, and back.
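The "looks the same on the outside" idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Docker's actual API: the `Container` class, its `start`, `stop`, and `logs` methods, and the `deploy` helper are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Container:
    """Hypothetical wrapper: any workload, same outside interface."""
    name: str
    workload: Callable[[], str]  # the code "inside the box"
    running: bool = False
    _log: List[str] = field(default_factory=list)

    def start(self) -> None:
        # Mark the container as running and record what the workload did.
        self.running = True
        self._log.append(f"{self.name}: started")
        self._log.append(f"{self.name}: {self.workload()}")

    def stop(self) -> None:
        self.running = False
        self._log.append(f"{self.name}: stopped")

    def logs(self) -> List[str]:
        # Return a copy so callers cannot alter the recorded history.
        return list(self._log)


def deploy(containers: List[Container]) -> List[str]:
    """The 'crane': handles every container identically, whatever it holds."""
    output: List[str] = []
    for c in containers:
        c.start()
        c.stop()
        output.extend(c.logs())
    return output


# Two unrelated workloads, handled through the same interface.
fleet = [
    Container("web", lambda: "serving pages"),
    Container("billing", lambda: "processing invoices"),
]
lines = deploy(fleet)
for line in lines:
    print(line)
```

The point of the sketch is that `deploy` never needs to know what a workload does; it relies only on the shared start, stop, and log behavior, much as a crane relies only on the shape of the shipping container.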

However, the process isn’t always cut and dried. One of our CXOs noted that “the organization of microservices is fantastic, but in the orgs where it really succeeds, you have to have a super-duper person who looks at the big picture” and says “this is how the different microservices will all work together…so the CIO has to empower a truly smart architect to come up with these APIs…where the microservices talk to each other.” As with any new architecture, a company’s experience will be shaped by the implementation and by those who are driving it.

Containerization Goes Mainstream

According to Deloitte, containerization boosts infrastructure utilization by 5X to 20X, reducing the need for hardware and VM licenses. With containers, software development becomes more flexible and predictable. Even humans grow more efficient; your DevOps people get to work on new problems instead of fixing old ones. And your environment becomes more resilient, because you have fine-grained knowledge of what’s been deployed, where it’s running, and how it is behaving. Equally important, problematic containers can be fixed and replaced quickly without adversely affecting the rest of the environment in unexpected ways.

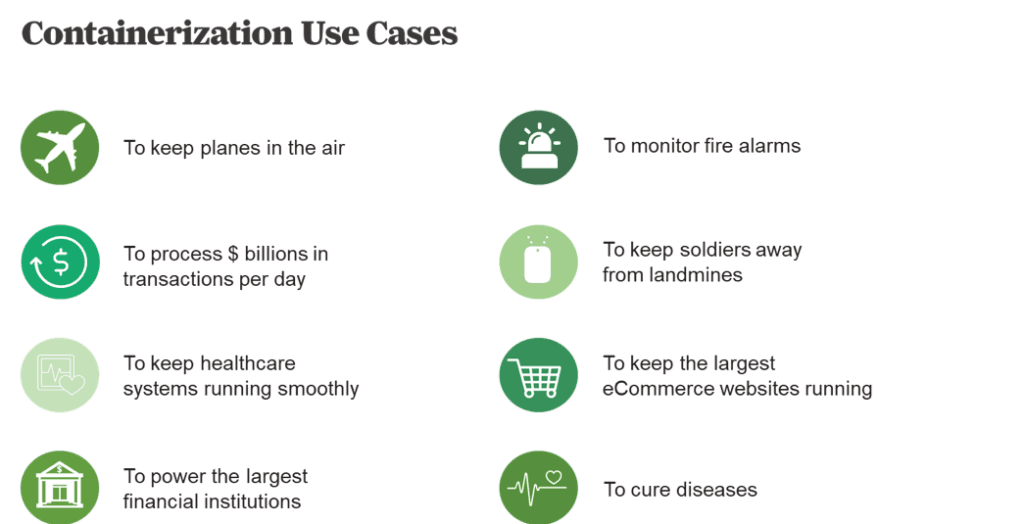

These are major reasons why containers have gone mainstream so quickly, and not just for low-risk applications at internet startups. As of the end of last year, there were more than 33 billion containers in use, a number that’s probably grown 50 percent since then.

You’ll find containers used for mission-critical apps at Fortune 100 firms like AT&T, Verizon, and Goldman Sachs, healthcare firms like Amgen and Merck, insurance giants like Anthem and MetLife, and across a wide swath of technology and public-sector organizations.

Five Key Trends for Containers in 2018

The Docker team made some insightful predictions of the trends that they believed were important in 2018. These were known as Five Bold Predictions for Containers. We wanted to include these predictions as additional content in this CIO Insights posting, as some are likely being realized already:

- Major security breaches will be foiled. Read-only containers reduce the attack surface available to hackers, even when running code that contains known vulnerabilities.

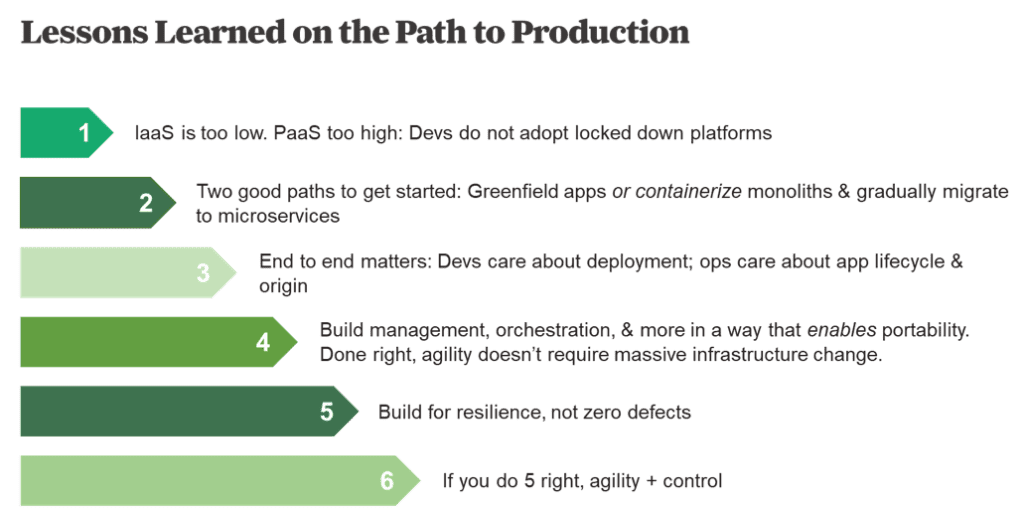

- Platform-as-a-Service adoption will decline. Cumbersome PaaS frameworks will be replaced by Container-as-a-Service offerings, offering greater agility, innovation, and efficiency.

- Enterprise IT will spend less on maintenance and more on innovation. Containers will allow CIOs to spend more than 20 percent of their budgets on new initiatives by unlocking ways to modernize and manage legacy apps.

- Security will replace orchestration as the next big challenge. With multiple ways to orchestrate containers, enterprises will begin to focus on segmenting and isolating threats by establishing ‘container boundaries.’

- Containers will accelerate digital transformation. By containerizing code, enterprises will experience faster app delivery, greater portability, hardened security, and more, allowing them to gain a competitive advantage.
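To make the first prediction more concrete, here is a minimal sketch of how a read-only container might be launched. It only builds the command and does not execute it. The `--read-only` and `--tmpfs` options are standard `docker run` flags; the helper name `read_only_run_command` and the image name `example/app:1.0` are hypothetical, chosen for illustration.

```python
from typing import List, Optional


def read_only_run_command(image: str,
                          scratch_dirs: Optional[List[str]] = None) -> List[str]:
    """Build (but do not execute) a `docker run` command whose root
    filesystem is mounted read-only."""
    cmd = ["docker", "run", "--rm", "--read-only"]
    for path in scratch_dirs or []:
        # --tmpfs gives the app a writable, in-memory scratch area at `path`
        cmd += ["--tmpfs", path]
    cmd.append(image)
    return cmd


# Hypothetical image name, used only for illustration.
command = read_only_run_command("example/app:1.0", scratch_dirs=["/tmp"])
print(" ".join(command))
```

With the root filesystem read-only, code running inside the container cannot modify the application's files, which is the reduction in attack surface the prediction refers to.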

Transformation Best Practices

Adopting containers is a necessary first step. But true IT transformation comes when you’ve built a software supply chain that manages the creation, provenance, performance, placement, and orchestration of tens of thousands of containers running in tens of thousands of places, over the course of the enterprise life cycle. Even traditional apps that run on old server infrastructure can be split into services, containerized, and managed in a more modern way.

But when you have lots of applications constantly updating and running in lots of places, building security into the supply chain is vital. You need to know whether these apps should be running in the first place.

Done correctly, you’re automating not only the production of code, but also its testing and deployment, whether it’s your code or from a third party. The fact that all the major vendors are now publishing in this format, signing and scanning their code before publishing, makes this very powerful.
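The "sign and scan before running" idea can be sketched as a simple gate. This is an illustration under loud assumptions, not how any particular registry works: real supply chains rely on public-key signatures, while this sketch substitutes an HMAC with a shared secret to stay self-contained. The names `PUBLISHER_KEY`, `sign`, and `may_run` are hypothetical.

```python
import hashlib
import hmac
from typing import Set

# Hypothetical shared secret; a real system would use public-key signatures.
PUBLISHER_KEY = b"demo-key-not-for-production"


def digest(artifact: bytes) -> str:
    """Content digest that identifies exactly one version of an artifact."""
    return hashlib.sha256(artifact).hexdigest()


def sign(artifact: bytes, key: bytes = PUBLISHER_KEY) -> str:
    """Publisher side: sign the artifact's digest."""
    return hmac.new(key, digest(artifact).encode(), hashlib.sha256).hexdigest()


def may_run(artifact: bytes, signature: str, scanned_clean: Set[str],
            key: bytes = PUBLISHER_KEY) -> bool:
    """Deployment side: run only artifacts that are both signed by the
    publisher and present in the set of digests that passed scanning."""
    signed_ok = hmac.compare_digest(sign(artifact, key), signature)
    return signed_ok and digest(artifact) in scanned_clean


image = b"contents of a container image"
signature = sign(image)
scanned_clean = {digest(image)}  # digests that passed a vulnerability scan

ok = may_run(image, signature, scanned_clean)
tampered = may_run(image + b" plus malware", signature, scanned_clean)
print(ok, tampered)
```

Because both checks key off the content digest, any change to the artifact after signing or scanning causes the gate to refuse it.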

New Term: ‘Organizational Microservices’

A change of this magnitude can appear daunting to large organizations that rely on hundreds of legacy applications. While containerization is revolutionary, with the right partner and approach, adoption can happen in an evolutionary way. Many enterprises start by taking a traditional app that’s causing a lot of pain, containerizing it, and moving it from one place to another. After a couple of weeks, when the benefits become clear, this can become a catalyst for broader change across the organization.

Some hands-on examples from our CXO network suggested that when “building a new framework…it requires substantial bandwidth for training and hand-holding.” One member of our CXO network recommended that companies “start with a small team of ‘experimentation’ for a small scope…and then slowly scale.”

However, taking these first steps brings changes that can have a broad impact across the organization. Inside the Fortune 3000 you’ve got large teams that are dependent upon other large teams to get their jobs done. Even when you have good people on both sides, it can be impossible to get decisions made; when one team makes a decision on its own, it can break something else in the organization.

Just as microservices implies small teams of people writing code with well-defined interfaces, you can also have small cross-functional teams that include developers, product managers, marketing, sales, and support — a concept people are calling ‘organizational microservices’.

Each team would have a well-defined area of responsibility but with total autonomy over that area. They’d be empowered to make decisions and to live by the consequences of those decisions. They would optimize for agility and being able to quickly recover if they made a mistake. Because each area of responsibility would be small, the impact of bad decisions would be much less catastrophic.

You’d need the organizational equivalent of containerization, orchestration, and lifecycle management, whereby you have consistent policies for making decisions, consistent places for publishing those decisions, and well-documented internal systems that tell you who’s in charge of what and whether they’re achieving their objectives. Just as you don’t have to adopt microservices whole hog, you can adopt organizational microservices in a small way, with a department or a business unit. Aligning teams around a technical microservices project (e.g. one team per microservice) would be a good opportunity to start experimenting.

Cultural changes like this are always the hardest. They need both top-down and grassroots support. Demonstrating early wins with small teams is a key way to achieve both.

The Road Ahead

We are rapidly evolving toward a world of decentralized applications, with no single authority or point of failure; a world that’s transparent, secure, and can operate at Internet scale. Containerization is only the beginning of this journey.