In every gold rush, the miners get the headlines. But the enduring fortunes are built by the people selling the picks, shovels, railroads, and power systems.

We are living through the AI gold rush.

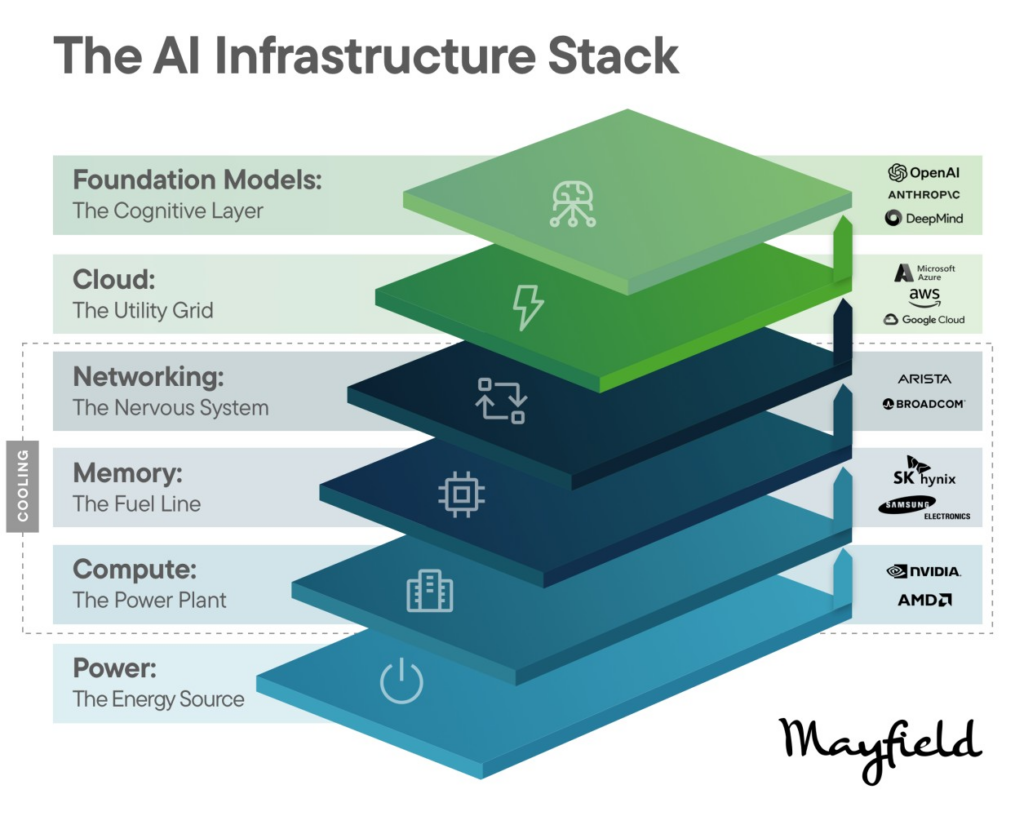

While the world debates which AI app will win, the biggest market value creation so far has accrued to the AI infrastructure layer: NVIDIA and AMD on compute; Micron on memory; Broadcom and Arista on networking; Microsoft, Amazon, and Google on cloud; and OpenAI, Anthropic, and Google DeepMind on foundation models.

AI is demanding a full-stack infrastructure reset of the digital economy.

AI is like electrification. Before appliances flourished, the grid had to be built. Before the grid, someone had to solve generators, transformers, cooling systems, and transmission lines. That’s where we are now.

Modern AI requires:

None of the above is incremental. It’s rebuilding the digital economy’s infrastructure from the ground up.

Look at the stack. Each layer tells the same story.

Compute is the power plant. NVIDIA and AMD supply the core energy source of AI. Compute was the first constraint, and the first place market value exploded. When something is scarce, capital-intensive, and performance-differentiated, it commands a big premium.

Memory is the fuel line. AI systems are memory-hungry, and high-bandwidth memory is no longer a commodity. Without memory throughput, compute stalls. Micron, SK Hynix, and Samsung Electronics are sitting on a strategic bottleneck.

Networking is the nervous system. AI clusters behave like distributed supercomputers, with tens of thousands of GPUs communicating in real time. Networking performance directly affects training speed, reliability, and cost. Arista and Broadcom sit at the center of that.

Cloud is the utility grid. Most enterprises will never build their own AI data centers. They’ll consume AI through the cloud. Microsoft Azure, AWS, and Google Cloud are becoming the utilities of the AI era.

Foundation models are the cognitive layer. OpenAI, Anthropic, and Google DeepMind are becoming the operating systems for intelligence. Developers standardize on their APIs, enterprises embed them into workflows, and ecosystems form. Once embedded, switching costs rise fast.

Three structural forces explain why value concentrates at the infrastructure layer first.

Everyone sees the GPUs. Fewer people see the secondary infrastructure boom forming underneath them.

As AI scales, the bottlenecks are shifting from chips to physics. AI data centers are becoming constrained by power availability, cooling efficiency, interconnect bandwidth, energy density, and grid stability. Every new bottleneck creates a new wave of companies.

As clusters scale to tens of thousands of GPUs, entirely new categories open up:

Bottom line: this wave of opportunity belongs to companies in advanced engineering, deep tech, hardware-software integration, energy systems, memory, and photonics. The AI gold rush is not just digital. It is physical.

The consolidation of the AI infrastructure layer isn’t a threat. It’s a signal.

Concentrated infrastructure creates a stable platform for massive application innovation. Every great platform shift works this way: infrastructure consolidates first, then entrepreneurial creativity explodes on top of it. We are still early. The stack is forming. The workflows are not yet reinvented.

If you’re building today:

During the California Gold Rush, miners searched for gold. Levi Strauss sold dry goods. Railroads transported supplies. Banks financed expansion. The steady fortunes were built by those who enabled the ecosystem.

Today, NVIDIA supplies the compute. The hyperscalers operate the distribution layer. Foundation models supply the intelligence. And a new generation of startups is emerging to solve the power, cooling, and connectivity constraints that scale creates.

The first wave of value accrues to those controlling the AI infrastructure layer. The second wave will accrue to those solving the physical constraints of scaling it.