This article is part of our Future of AI series from Imagination in Action 2025 Silicon Valley Summit, where founders, leaders, and investors explored what’s next for AI.

Amid the AI explosion, the industry has a glaring problem to solve: how to get enough power to use AI to its full potential.

That’s the billion-dollar question for AI stakeholders right now.

The rapid growth of AI data centers is creating a crisis in the American energy system. At the same time, the availability of electric power is now one of the chief obstacles to AI growth. Resolving this tension will be crucial to the continued stability of both the grid and the AI industry.

Many possible solutions are on the table, including more flexible data centers, more efficient chips, AI-driven grid management, and alternative energy sources such as nuclear power. AI companies including Oracle, Microsoft, and Amazon have even considered powering data centers with their own small modular reactors.

If companies want to scale their computation and win the AI race, they will have to figure out how to build the right energy infrastructure.

Using AI to optimize AI

Unsurprisingly, AI itself is being used as a tool to manage energy costs. It’s a bit like using “Google Maps for electrons” to utilize the grid more intelligently. In 2019, Google’s DeepMind reported using AI to predict wind power output 36 hours in advance, boosting the value of Google’s wind energy by roughly 20%.

Tamanna Sait, VP of cloud engineering at Crusoe, aims to build more flexible data centers that can minimize environmental impact and avoid consuming power. Crusoe is currently building a data center in Abilene, Texas, that uses diverse renewable energy sources, including wind, solar, geothermal and hydroelectric power.

By using AI to manage these power sources, the data center can forecast supply and demand, predicting its own energy needs hours in advance and balancing different sources to meet them.

“We are using the abundant West Texas wind to generate power, and natural gas turbines to generate the backup power,” Sait said.
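The wind-first, gas-as-backup balancing described above can be sketched as a simple hourly dispatch plan. This is only an illustration, not Crusoe's system: the function name `plan_dispatch`, the `HourPlan` record, and all forecast numbers are hypothetical, and a real controller would work from probabilistic forecasts and many more constraints.

```python
from dataclasses import dataclass


@dataclass
class HourPlan:
    hour: int
    wind_mw: float     # wind power used this hour
    gas_mw: float      # backup gas power used this hour
    surplus_mw: float  # forecast wind left unused


def plan_dispatch(demand_mw, wind_forecast_mw, gas_capacity_mw):
    """Serve each hour's forecast demand with wind first, then gas backup."""
    plans = []
    for hour, (demand, wind) in enumerate(zip(demand_mw, wind_forecast_mw)):
        wind_used = min(demand, wind)
        shortfall = demand - wind_used  # what wind cannot cover
        if shortfall > gas_capacity_mw:
            raise ValueError(f"hour {hour}: shortfall {shortfall} MW exceeds backup")
        plans.append(HourPlan(hour, wind_used, shortfall, wind - wind_used))
    return plans


# Hypothetical forecasts, in megawatts, for the next four hours.
demand = [100.0, 120.0, 110.0, 90.0]
wind = [130.0, 80.0, 110.0, 40.0]
for plan in plan_dispatch(demand, wind, gas_capacity_mw=60.0):
    print(plan)
```

With these made-up numbers, gas runs only in the two hours when the wind forecast falls short of demand. A forecast that looks hours ahead is what makes it possible to shift flexible work into the windy hours instead.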

To understand the stakes, consider this: AI servers in the U.S. gobbled up more than 40 terawatt-hours in 2023, a huge increase from 2017. As AI scales, its power consumption will escalate, with one estimate suggesting data centers could consume up to 945 terawatt-hours globally by 2030. That amount is comparable to Japan’s entire annual electricity output.
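To put those annual totals in more familiar terms, they can be converted into the average continuous power they imply. The short calculation below is a back-of-the-envelope sketch: it uses only the two figures quoted above, assumes a flat 8,760-hour year, and ignores the daily and seasonal peaks that matter most to grid operators.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours (non-leap year)


def average_gigawatts(terawatt_hours_per_year):
    """Average continuous power, in GW, implied by an annual energy total."""
    return terawatt_hours_per_year * 1000 / HOURS_PER_YEAR


us_ai_2023 = average_gigawatts(40)    # U.S. AI servers, 2023 (quoted as "more than 40")
global_2030 = average_gigawatts(945)  # upper estimate for data centers globally, 2030
print(f"{us_ai_2023:.1f} GW, {global_2030:.1f} GW")
```

Under these assumptions, 40 terawatt-hours a year works out to about 4.6 gigawatts of round-the-clock demand, and 945 terawatt-hours to roughly 108 gigawatts.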

Revamping performance, chips, and cooling

High-performance chips generate tremendous heat, requiring extensive cooling infrastructure that adds another layer of energy consumption.

Companies are working with enterprises to use AI to optimize those cooling systems and other aspects of a data center’s operation. For instance, Bay Compute’s AI can help find where a data center is wasting energy, helping the operator to cut costs by staggering heavy usage, said Vijay N. Gadepally, cofounder of Bay Compute.

He said customers are already seeing performance improve, much as households can save money and avoid problems by staggering their appliance usage.

“It’d be like having a single 100 amp breaker in your house, and you’re charging your car, plus turning on the dryer, plus your refrigerator, plus running the air conditioner—all at the exact same time. Of course, you’re going to run into a problem,” Gadepally said.

“But if you can stagger them and move usage around, you can do a lot more with that single breaker.”
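Gadepally's breaker analogy can be sketched as a small scheduling problem: pack loads into time slots so that no slot exceeds the limit. The code below is a toy illustration, not Bay Compute's method. The appliance currents are invented, the function name `stagger` is hypothetical, and the first-fit-decreasing heuristic is one simple choice among many. It also treats every load as deferrable, which a real refrigerator is not.

```python
def stagger(loads_amps, limit_amps):
    """Assign loads to time slots (first-fit decreasing) so no slot exceeds the limit."""
    slots = []  # each slot is a list of (name, amps) running at the same time
    for name, amps in sorted(loads_amps.items(), key=lambda kv: -kv[1]):
        if amps > limit_amps:
            raise ValueError(f"{name} alone exceeds the {limit_amps} A limit")
        for slot in slots:
            if sum(a for _, a in slot) + amps <= limit_amps:
                slot.append((name, amps))  # fits alongside what is already running
                break
        else:
            slots.append([(name, amps)])  # no slot had room, so run it later
    return slots


# Hypothetical household loads, in amps; together they draw 116 A.
loads = {"car charger": 48, "dryer": 30, "air conditioner": 30, "refrigerator": 8}
for i, slot in enumerate(stagger(loads, limit_amps=100), start=1):
    print(f"slot {i}: {slot} -> {sum(a for _, a in slot)} A")
```

Run all at once, these loads would exceed the 100 amp limit. Staggered into two slots, the peak stays under it, which is the same idea applied to heavy jobs in a data center.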

Efficiency matters more and more. Chip manufacturers will increasingly be measured on performance per watt rather than performance per dollar, said Amin Vahdat, VP of AI and infrastructure at Google.

Google is also investing in methods like liquid cooling and analyzing performance metrics as energy initiatives grow.

Other companies are working on specialized chips that are more power-efficient than the GPUs currently used for AI workloads.

On the chip side, Auradine, led by CEO Rajiv Khemani, is adapting technology it initially built for blockchain and Bitcoin to make AI computation more energy-efficient. Its approach to low-power computation takes advantage of the V-squared effect: a chip's dynamic power consumption scales with the square of its supply voltage. Auradine runs chips at “near threshold voltage,” or below 0.3 volts, compared to typical AI chips that run at 0.75 volts, per Khemani. That greatly reduces their power consumption.
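The V-squared effect can be made concrete with the two voltages quoted above. The sketch below assumes the common textbook approximation that dynamic power is proportional to capacitance times voltage squared times clock frequency, and it holds capacitance and frequency fixed. That is a simplification: chips running near threshold voltage typically also run at lower clock speeds, and leakage power is ignored, so this is not a measurement of any Auradine product.

```python
def dynamic_power_ratio(v_low, v_ref):
    """Ratio of dynamic power at v_low to that at v_ref, with all else held equal.

    Assumes P is proportional to C * V**2 * f with C and f unchanged.
    """
    return (v_low / v_ref) ** 2


ratio = dynamic_power_ratio(0.3, 0.75)  # voltages quoted in the article
print(f"dynamic power at 0.3 V is about {ratio:.0%} of that at 0.75 V")
```

Under those assumptions, dropping from 0.75 volts to 0.3 volts cuts dynamic power to about 16% of the original, a reduction of roughly 84%, because (0.3 / 0.75) squared is 0.16.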

Costs and infrastructure continue to evolve

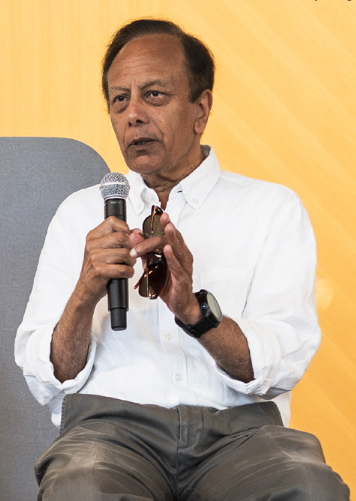

Pradeep Sindhu, founder, chairman and CTO of Juniper Networks, referred to a “golden era of silicon hardware” that will drive more vertical integration as AI grows.

The winners will be companies where teams understand algorithms, software layers, silicon design, and networking—then integrate all of it into cohesive systems. That kind of full-stack thinking is rare but increasingly essential, Sindhu said.

The business models undergirding the AI ecosystem will transform as energy economics shift. Data centers, cloud providers, GPU manufacturers: All will need to adapt their pricing, service offerings, and strategic positioning.

Expect relentless innovation across the entire infrastructure stack as hardware and software teams work to unlock new efficiencies. The companies that crack the code on sustainable, scalable power will capture outsized value.

Balancing power supply and demand is essential for AI to scale into robotics, life sciences, and enterprise applications. Without sustainable energy solutions, the promise of AI could be limited by its own hunger for power.
